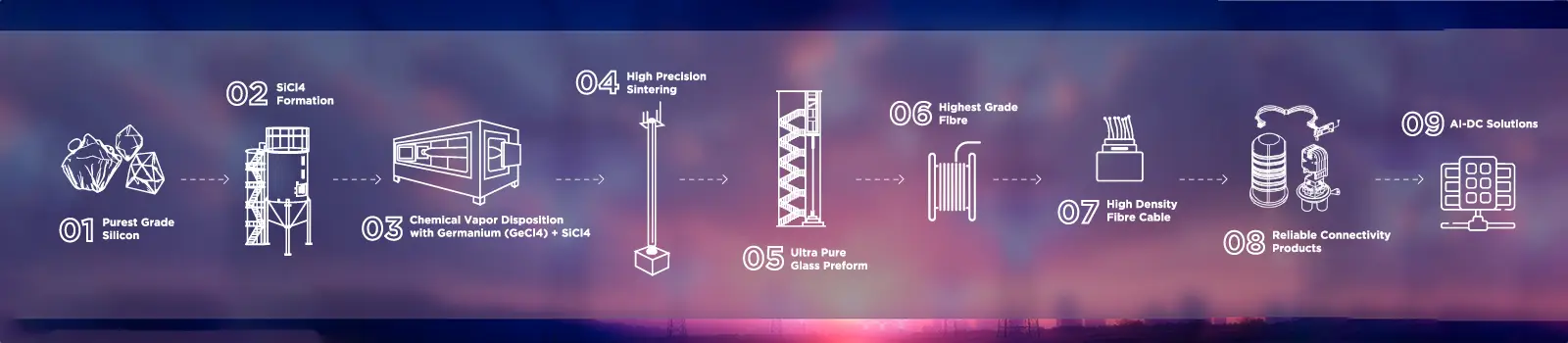

Leverage the power of STL’s end-to-end vertical integration, from glass preform and fiber drawing to advanced cable production and pre-terminated solutions.

STL Neuralis draws its name from the intelligent, interconnected pathways of a neural network, symbolizing seamless connectivity and speed.

Acting as the central nervous system for Data Center infrastructure, the STL Neuralis Data Center portfolio provides the robust, high-performance foundation necessary to process, scale, and power the most demanding Data Center workloads.

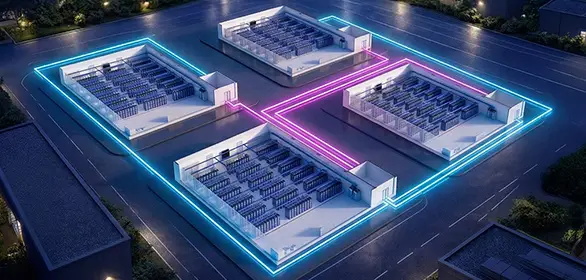

Data Center Interconnect (DCI) is the high-speed optical backbone that links data center buildings to one another - enabling the seamless, low-latency flow of data across campuses, metros, and regions that modern cloud and AI workloads demand. STL brings DCI expertise that helps operators build interconnect infrastructure that scales efficiently, meets the strictest global standards, and keeps pace with the accelerating demands of AI-era data centers.

Data Center Whitespace refers to the purpose-built space within a data center facility where critical IT infrastructure - compute, storage, and networking - is deployed alongside the power and cooling systems that sustain it. STL enables organisations to maximise whitespace utilisation, reduce operational complexity, and build data center environments that scale seamlessly as business demands evolve.

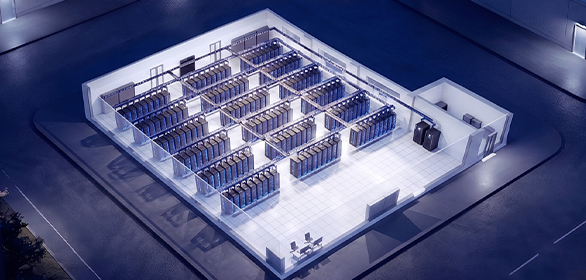

The most demanding fibre environments ever built. GPU clusters require optical port counts that overwhelm traditional architectures. STL’s hyperscale portfolio is engineered for this reality.

Design Importance: A single AI GPU building can exceed 6,000 optical connections. Every duct pull must be specified for hardware not yet designed.

Infrastructure serving multiple tenants with different architectures, at unpredictable growth rates, with zero tolerance for service interruption.

Operational Importance: Every tenant onboarded without a cable pull is direct margin. Dark fibre reserve makes onboarding a software event, not a construction event.

Constrained budgets, mixed-vendor environments, fire-rating compliance, and legacy infrastructure running alongside new high-density deployments in the same rack.

Migration Importance: Run 10G legacy and 400G simultaneously. STL’s multi-base enclosures serve both from one chassis, indefinitely.

Purpose-built optical infrastructure for every segment of the data center market, from the largest hyperscale campuses to enterprise-grade hybrid environments.

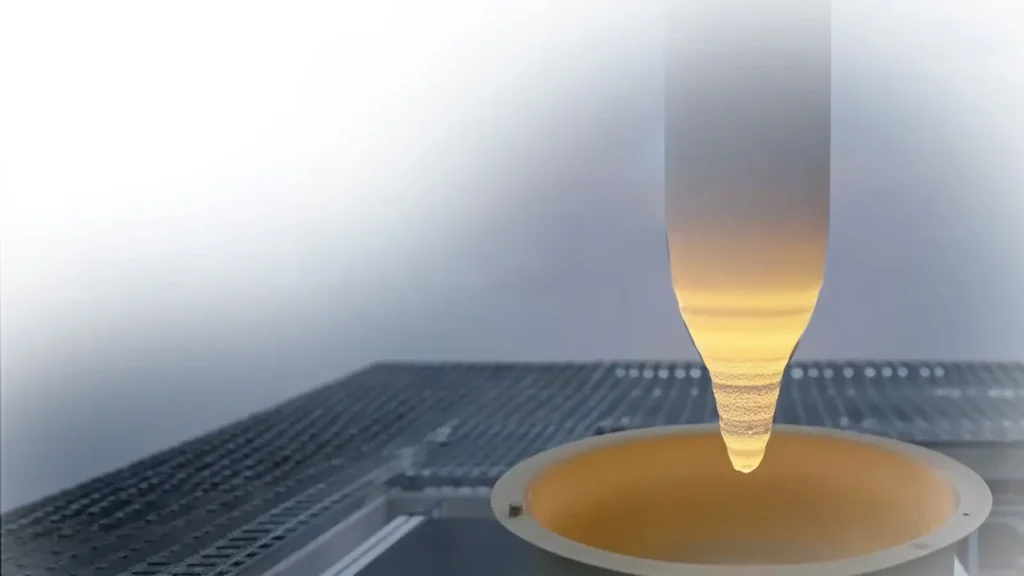

STL manufactures its own glass preforms, the silica cylinders from which all optical fibre is drawn. Owning the base of the supply chain matters most when fibre demand spikes, as it did during 5G, during broadband stimulus, and during AI build cycles.

This supply chain position also enables us to develop specialty fibres that assemblers simply cannot access: Multi-Core Fibre (MCF), Hollow-Core Fibre (HCF), and bend-optimised 200µm and 165µm formats. These are the fibre technologies the next generation of AI interconnect will be built on. Supply chain security is a data centre infrastructure requirement. STL owns the supply chain.

Most fibre vendors will configure a cable from a catalogue. STL will co-engineer a solution with you — and the outputs are real: field-qualified, manufacturable, and delivered on your project timeline, not ours.

A recent example is STL’s Pink Slic® fibre, a bend-optimised, ultra-low-loss optical fibre co-developed with network operators for ultra-high-density deployment environments where standard fibre geometry creates routing constraints. This is the output of genuine co-creation: an operator challenge paired with STL’s PhD-led R&D team, which turns engineering challenges into deployable outcomes.

30 years of industrial-scale fibre manufacturing. The most stress-tested supply chain in the industry. STL has been the fibre backbone for telecom operators across Asia, North America, and Europe for 30+ years — through 5G, broadband build-outs, and the first hyperscale wave. We don’t position for cycles. We deliver through them.

That depth means our manufacturing quality systems have been tested at industrial scale across the world’s most demanding networks. Every data centre cable ships to the same telecom-grade standards — across five cable facilities, four fibre facilities, four connectivity facilities.

A single AI cluster can need 50,000–100,000 optical connections. The transition to 400G and 800G means higher-speed transceivers now consume more fibres per port, not fewer. And every campus cable buried today must carry traffic from GPU hardware that has not yet shipped. The only rational specification is maximum fibre count, with a partner who can deliver it.
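To make the fibres-per-port point concrete, here is a minimal sketch. The per-port fibre counts are those of common interface types (a duplex link uses 2 fibres, 400G-DR4 uses 8, 800G-DR8 uses 16). The port count of 1,000 is purely illustrative and is not an STL figure, and `fibres_required` is a hypothetical helper name.

```python
# Fibres consumed per transceiver port for a few common interface types.
# Parallel optics use one fibre per lane per direction.
FIBRES_PER_PORT = {
    "duplex": 2,     # one transmit fibre, one receive fibre
    "400G-DR4": 8,   # 4 lanes x 2 directions
    "800G-DR8": 16,  # 8 lanes x 2 directions
}


def fibres_required(ports: int, interface: str) -> int:
    """Total fibres needed to connect `ports` ports of the given interface type."""
    return ports * FIBRES_PER_PORT[interface]


# Illustrative port count only (an assumption, not a sizing recommendation).
ILLUSTRATIVE_PORTS = 1_000

for interface in FIBRES_PER_PORT:
    total = fibres_required(ILLUSTRATIVE_PORTS, interface)
    print(f"{interface}: {total} fibres for {ILLUSTRATIVE_PORTS} ports")
```

For the same number of ports, moving from duplex links to 800G-DR8 multiplies the fibre count by eight under these assumptions, which is why fibre count becomes the dominant specification decision.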

Full MMC 12F, 16F, 24F range. Up to 3456F per 1U — co-developed with hyperscale operators for GPU fabric.

Celesta 6912F serves a full GPU compute building in one duct pull — with 62% of duct space still free.

Glass preform ownership and multi-continent manufacturing insulate delivery from supply shocks that disrupted other vendors during AI build cycles.

AI topologies are non-standard. STL’s PhD-led R&D team co-engineers fibre counts, connectors, and configurations for your specific fabric — not a catalogue product forced into an approximation.

Pre-terminated trunks and enclosures qualified for 400G and 800G transceiver insertion loss budgets. I/O cable eliminates the greyspace splice — recovering 0.5–1.5dB of optical budget exactly where AI link margins are tightest.

STL manufactures Multi-Core Fibre (MCF) and Hollow-Core Fibre (HCF) — the fibre technologies being evaluated for next-generation AI interconnect architectures. When the industry transitions, our customers are already partnered with the manufacturer leading it.

STL manufactures its own glass preforms; these are the glass cylinders from which all optical fiber is drawn. Owning the base of the supply chain is important, especially during times when fiber demand spikes.

This fiber independence enables STL to develop specialty fibers such as Multi-Core Fiber (MCF) and bend-optimised 200µm, 180µm, and 160µm formats; these are the fiber technologies the next generation of AI GPU connectivity will be built on.

With 30 years of industrial-scale fiber manufacturing, STL has been the fiber backbone for network builders across Asia, North America, and Europe.

No two data centers are the same, so STL co-creates solutions that are tailor-made for your data center build.

End-to-end FTTH solutions, from advanced optical fibre cables to high-performance connectivity, that speed rollout and lower total cost of ownership.

During the trial, STL’s Multiverse™ Multi-Core 4-core Fibre.

With in-house expertise in glass science, material science, and precision manufacturing, a big-picture understanding of network architectures, and a deep understanding of network deployment and operations, STL brings complete control and predictability across the digital connectivity value chain.

STL’s Celesta IBR Cable combines robust performance for duct installations with the productivity of high-count mass fusion splicing.

QNu Labs, incubated at IIT Madras Research Park in 2016 and backed by the National Quantum Mission.

STL, a leading connectivity solutions provider for AI-ready digital infrastructure, today announced a significant..

AI clusters can need 50,000–100,000 optical connections — exceeding entire legacy campus plants. At 400G/800G, higher-speed transceivers consume more fibres per port. Every cable buried today must serve GPU hardware not yet designed. Conservative fibre count specification is finished.

The 2020–2023 shortage cycle exposed every vendor without upstream manufacturing ownership. Preform supply became a bottleneck that cascaded through resellers simultaneously. For multi-phase builds, raw material resilience is now a tier-1 specification requirement.

The gap between specifying campus cable and first 800G deployment is typically 3–5 years. By 1.6T, the cable plant buried today is the only physical layer available. Specify for hardware not yet announced — with dark fibre reserve for two or three future transceiver generations.

Campus duct cannot be meaningfully expanded once a campus is operational. The fibre count at the initial pull is the highest-leverage specification decision in any build. A 6912F cable in a 110mm duct occupies ~38% of cross-section — leaving 62% free. Lowest total cost of ownership for any route used beyond Year 5.

MMC delivers 3,456 fibres per 1U — versus 576 from a 72-port MPO-8 panel. For 51.2Tbps spine switches, that is 1U versus 6U of patch field for the same switch. The VSFF transition is already underway on AI-first builds and requires ecosystems supporting both formats during migration.
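The density comparison above can be checked with a few lines of arithmetic. This is a sketch under one assumption that the text implies but does not state outright: the 72-port MPO-8 panel occupies 1U. The function names are hypothetical.

```python
def fibres_per_panel(ports: int, fibres_per_connector: int) -> int:
    """Fibres terminated on one panel."""
    return ports * fibres_per_connector


def rack_units_needed(total_fibres: int, fibres_per_ru: int) -> int:
    """Rack units required to terminate total_fibres (rounded up)."""
    return -(-total_fibres // fibres_per_ru)  # ceiling division


# Figures quoted in the text.
mpo8_per_ru = fibres_per_panel(ports=72, fibres_per_connector=8)
mmc_per_ru = 3456

print(mpo8_per_ru)                                 # 576
print(rack_units_needed(mmc_per_ru, mpo8_per_ru))  # 6
```

Terminating the same 3,456 fibres therefore takes six MPO-8 panels where a single MMC panel would do, which matches the 1U versus 6U figure.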

Catalogue products serve median use cases. Hyperscale architectures rarely are median. Forcing catalogue products into non-standard topologies produces insertion loss failures, compliance issues, or routing constraints requiring costly workarounds. Co-creation — engineering bespoke to field-qualified product — is what separates tier-1 infrastructure partners

A data center is a facility housing the compute, networking, storage, and power infrastructure that processes and transmits data at scale. Every cloud service, AI application, and enterprise system runs in one. They range from single-room server rooms to multi-building campuses consuming hundreds of megawatts — all dependent on physical fiber connectivity.

Modern applications require compute and networking beyond what any single building can provide. With AI, the need has grown dramatically — LLM training requires GPU-dense clusters at densities previous generations could not anticipate. The data center is the operational core of the global economy.

Enterprise / Private — Single-organisation facilities serving internal applications and private cloud. Typically a few hundred kilowatts to a few megawatts.

Colocation — Multiple organisations rent space and power; the operator owns the building, tenants manage their own equipment.

Hyperscale — 100MW+ facilities operated by the world's largest technology companies, pushing the frontier of what connectivity infrastructure can deliver.

Neocloud / MTDC — Hyperscale-density multi-tenant facilities selling capacity to enterprise and AI customers.

Hyperscalers are the companies that own massive, global networks of very large data centers. They provide computing power that scales with their customers' requirements. Their main strength is their sheer size and the ability to offer hundreds of different services under one roof.

A neocloud is a specialized, next-generation cloud provider focused exclusively on providing GPU-as-a-Service (GPUaaS) to handle massive AI, machine learning, and data-intensive workloads. Neoclouds claim to offer faster access to high-performance GPU infrastructure at optimized costs.

Enterprises are large, established organizations, such as banks, manufacturers, service firms, and airlines, that use technology to run their business operations. They focus on security, stability, and integrating new technology with their existing legacy systems.
